Do you really need one massive machine learning model to solve every business problem?

Machine learning helps computers learn from data, recognize patterns, and make predictions without being programmed step by step.

Many businesses assume they need one large, complex model to handle everything. But large models can become heavy, expensive, and difficult to manage.

That's where micromodels in machine learning come in.

Micromodels in machine learning are compact and specialized models built to handle a narrowly defined task. Instead of trying to capture an entire system, they isolate specific components or data subsets. They deliver precise and actionable insights where they matter most.

So, if you're planning to adopt machine learning that is easy to manage and easy to adapt, micromodels may be all you need.

In this guide, you'll understand:

- What are micromodels in machine learning

- Key characteristics

- Applications of micromodels

- Micromodels vs macromodels: key differences

- Benefits and limitations

- Steps to build and integrate them effectively

By the end of this guide, you'll know exactly what role micromodels play and how you can implement them in your business.

So, without any further delay, let's dive in!

What Are Micromodels in Machine Learning?

Micromodels in machine learning are compact and specialized predictive models built to solve one clearly defined task within a larger system.

Instead of trying to model an entire process, they focus on a specific component, interaction, or subset of data.

They are trained on limited and relevant data. Because they are compact, they need less computing power, train faster, and give quick results. This makes them easier to manage and update compared to large, general models.

Micromodels are often used as parts of a bigger AI setup. Each one handles a specific job, such as analyzing a certain data segment, optimizing a machine component, predicting traffic flow in an area, or supporting automated data labeling.

In simple terms, micromodels break complex systems into smaller, manageable pieces so businesses can get precise and practical insights.

Key Characteristics of Micromodels

- Highly specialized: Designed to solve one clearly defined task. For example, detecting a specific defect or identifying a particular data pattern. This narrow focus improves accuracy within that task.

- Trained on small, focused datasets: They rely on carefully selected and relevant data rather than massive datasets. Because the scope is limited, they perform well without needing large-scale training data.

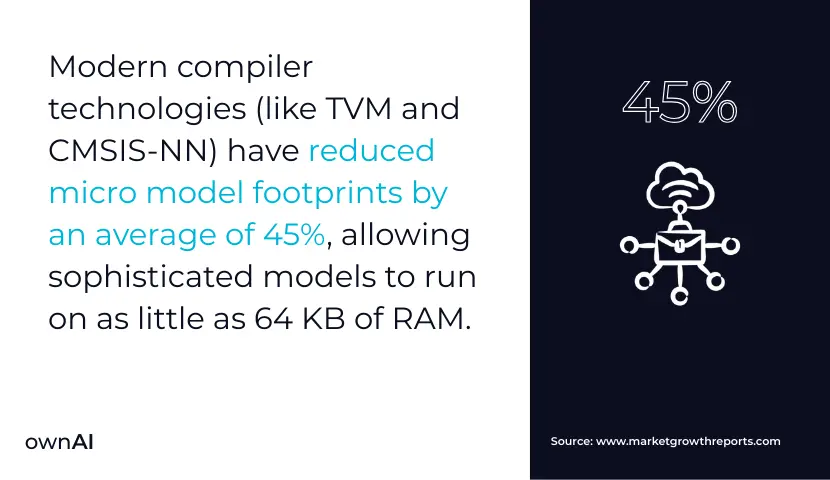

- Low resource consumption: Built with fewer parameters, which means they require less memory and computing power. This makes them suitable for edge devices, IoT systems, and environments with limited infrastructure.

- Precision-focused training: They can be trained very closely on a narrow dataset to master a specific task. This focused training improves performance within its defined boundaries.
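To make the resource point concrete, the memory needed for a model's weights can be roughly estimated as the number of parameters multiplied by the bytes used per parameter. The sketch below assumes 4 bytes per parameter (32-bit floats), and the two parameter counts are hypothetical figures chosen only to show the difference in scale.

```python
def weight_memory_mb(num_parameters: int, bytes_per_parameter: int = 4) -> float:
    """Rough size of a model's weights in megabytes (1 MB = 1,000,000 bytes)."""
    return num_parameters * bytes_per_parameter / 1_000_000


# Hypothetical parameter counts, for illustration only.
micromodel_params = 50_000
large_model_params = 1_000_000_000

print(weight_memory_mb(micromodel_params))   # 0.2
print(weight_memory_mb(large_model_params))  # 4000.0
```

This counts weights only. Real memory use also depends on activations, the runtime, and the input size.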

Applications of Micromodels in Machine Learning

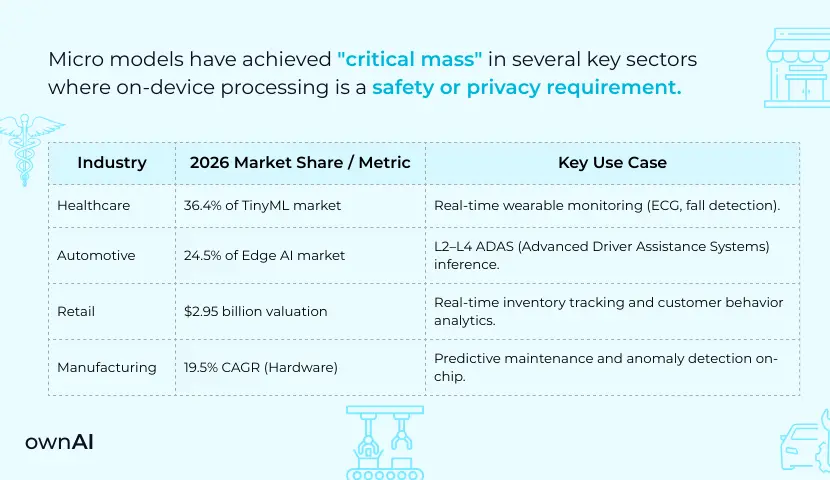

Micromodels are used across different industries to solve focused and practical problems. Here are the most important applications:

1. Smart Devices and IoT Systems

Micromodels run directly on smart devices and sensors. They support tasks like voice activation, gesture detection, activity tracking, and health monitoring. Their compact design allows fast responses without heavy infrastructure.

2. Factory Machines and Maintenance

In manufacturing, micromodels monitor specific machine parts. They analyze sounds or vibrations to detect problems early. This helps prevent breakdowns, reduce downtime, and improve productivity.
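As a rough illustration of how small such a monitor can be, here is a minimal sketch that flags vibration readings far from a healthy baseline using a z-score. The class name, the threshold of 3 standard deviations, and the sample readings are all assumptions made for this example, not a production method.

```python
from statistics import mean, stdev


class VibrationMonitor:
    """Flags readings that deviate strongly from a healthy baseline."""

    def __init__(self, baseline: list[float], z_threshold: float = 3.0):
        self.mean = mean(baseline)
        self.std = stdev(baseline)
        if self.std == 0:
            raise ValueError("Baseline readings must vary to compute a z-score")
        self.z_threshold = z_threshold

    def is_anomalous(self, reading: float) -> bool:
        # Distance from the baseline mean, measured in standard deviations.
        z_score = abs(reading - self.mean) / self.std
        return z_score > self.z_threshold


# Hypothetical vibration amplitudes recorded while the part was healthy.
baseline = [2.0, 2.1, 1.9, 2.2, 2.0, 1.8, 2.1, 2.0]
monitor = VibrationMonitor(baseline)

print(monitor.is_anomalous(2.1))  # False
print(monitor.is_anomalous(4.5))  # True
```

The whole "model" is two numbers (a mean and a standard deviation), which is why this kind of check can run on very modest hardware.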

3. Healthcare and Medical Devices

Micromodels are used in specialized medical tools. For example, hearing aids use them to filter noise and enhance speech clarity. Wearable or in-ear devices can monitor breathing patterns. Their narrow focus ensures accurate and reliable performance for each task.

4. Traffic and Urban Planning

Cities use micromodels to predict traffic flow in specific areas. This helps manage congestion and improve road planning. The goal is smoother movement and better city management.

5. Environmental Monitoring

Battery-powered sensors use micromodels to track air quality or support wildlife monitoring. Because these models require less power and computing capacity, they are suitable for long-term use in outdoor environments.

6. Visual Detection Systems

Micromodels handle specific tasks like identifying objects or sorting products in automated systems. They focus on one job and perform it accurately.

7. Process Improvement Through Data

Micromodels can study how certain environmental factors affect systems. This helps organizations understand patterns and improve performance step by step.

Benefits of Using Micromodels in Machine Learning

Here are the top five benefits of using micromodels in machine learning:

1. Higher Accuracy Through Narrow Focus

Micromodels are built to solve one clearly defined problem. Because they are not trying to manage multiple objectives at once, they can analyze a specific data subset in depth. This focused approach often leads to more precise predictions and better performance within that defined scope.

2. Strong Efficiency and Faster Results

Due to their compact structure, AI micromodels require less memory, processing power, and energy. They also provide fast inference, which means results are generated quickly. This makes them practical for IoT systems, mobile devices, and edge environments where heavy infrastructure is not available.

3. Faster Development and Iteration Cycles

Micromodels can be trained and refined in shorter time frames compared to large-scale models. Their limited scope allows teams to experiment, validate, and improve performance quickly. This supports rapid prototyping and enables organizations to move from idea to deployment with less delay.

4. Scalability Through Modular Integration

Micromodels can be combined within a larger AI system, where each model handles a specific function. This modular structure allows businesses to expand capabilities step by step. Instead of rebuilding the entire system, new micromodels can be added as needs evolve.
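One way to picture this modular structure is a small registry that routes each task to its own micromodel. The sketch below is only an illustration: `MicromodelRegistry`, the task names, and the two stand-in functions are hypothetical, and real micromodels would be trained models rather than hand-written rules.

```python
from typing import Callable, Dict

# Each micromodel is represented as a function from an input record to a result.
Micromodel = Callable[[dict], object]


class MicromodelRegistry:
    """Routes each task to the one micromodel responsible for it."""

    def __init__(self) -> None:
        self._models: Dict[str, Micromodel] = {}

    def register(self, task: str, model: Micromodel) -> None:
        self._models[task] = model

    def predict(self, task: str, record: dict) -> object:
        if task not in self._models:
            raise KeyError(f"No micromodel registered for task '{task}'")
        return self._models[task](record)


# Hypothetical stand-ins for trained micromodels.
def defect_detector(record: dict) -> bool:
    return record["scratch_score"] > 0.8


def congestion_predictor(record: dict) -> str:
    return "high" if record["vehicles_per_min"] > 40 else "low"


registry = MicromodelRegistry()
registry.register("defect_detection", defect_detector)
registry.register("traffic_congestion", congestion_predictor)

print(registry.predict("defect_detection", {"scratch_score": 0.93}))     # True
print(registry.predict("traffic_congestion", {"vehicles_per_min": 25}))  # low
```

Adding a new capability is then a single `register` call, and the existing models stay untouched.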

5. Easier Management and Clearer Communication

Because micromodels are focused and less complex, they are easier to monitor, update, and maintain. Their outputs are also easier to explain to non-technical stakeholders. This improves transparency, builds trust in decision-making, and simplifies long-term system management.

Drawbacks of Micromodels in Machine Learning

Here are some major drawbacks of micromodels in machine learning:

1. Narrow Perspective Can Miss the Bigger Picture

Micromodels are designed to focus on one small part of a system. While this improves precision, it can also limit visibility. They may overlook broader trends, hidden interactions, or system-wide dependencies. In complex environments, relying only on isolated models can lead to incomplete understanding and less informed decisions.

2. Limited Ability to Generalize

Because micromodels are trained on specific data subsets, they often struggle when exposed to new or slightly different data. They perform well within their defined boundaries but may not adapt easily to changing conditions or broader datasets. This makes them less flexible outside their original purpose.

3. Higher Risk of Overfitting

Training on small and highly focused datasets increases the risk of overfitting. The model may learn patterns that are too closely tied to the training data, including noise. As a result, performance can decline when applied to real-world scenarios beyond the original dataset.

4. Integration and Coordination Challenges

In larger AI systems, multiple micromodels must work together. Coordinating data flow, ensuring consistency, and avoiding conflicts between models requires strong technical infrastructure and management strategies. Without proper planning, system complexity can increase quickly.

5. Growing Maintenance Complexity

Each micromodel requires monitoring, updates, validation, and alignment with business goals. As organizations deploy more models, the total maintenance effort increases. Managing many small models can demand continuous supervision and dedicated resources to keep the system stable and effective.

Micromodels vs Macromodels: Key Differences

Here are the key differences between micromodels and macromodels:

| Micromodels | Macromodels |
|---|---|
| Built to solve one specific problem | Built to solve many problems at once |
| Use small and focused datasets | Use large and wide datasets |
| Give detailed results for a narrow area | Give general results across the whole system |
| Need less computing power | Need powerful systems like cloud or GPUs |
| Train and update quickly | Take more time to train and improve |
| Easy to manage and explain | Harder to manage due to size and complexity |
| Can be combined with other small models in one system | Usually works as one large unified model |

When to Choose Micromodels?

- Choose micromodels when you need accurate results for a specific task, want faster deployment, or are working with limited computing resources. They are also useful when you prefer building systems step by step.

When to Choose Macromodels?

- Choose macromodels when you need to analyze large amounts of data across many areas or want one system to handle broad and complex tasks.

6 Steps to Create Micromodels in Machine Learning

Here's the step-by-step process to build micromodels in machine learning:

Step 1. Define a Precise Business Objective

Start with one clearly defined problem. Do not try to solve multiple goals at once.

Pick one specific task. For example, monitoring a single machine component, analyzing one traffic zone, or detecting one type of defect.

The objective should be measurable and practical. When the scope is narrow and clear, the model becomes easier to design, train, and evaluate.

Step 2. Collect and Prepare Relevant Data

Now, collect only the data that directly supports the chosen objective. The dataset should be clean, accurate, and relevant.

This stage includes removing unnecessary inputs, handling missing values, normalizing data, and selecting useful features. Since micromodels rely on smaller datasets, data quality becomes critical for performance.
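The preparation steps above can be sketched in a few lines. This is a minimal example under stated assumptions: missing values are filled with the column mean, scaling is min-max to the range 0 to 1, and the field names (`temperature`, `vibration`, `operator_id`) are hypothetical.

```python
from statistics import mean


def prepare(rows: list[dict], features: list[str]) -> list[dict]:
    """Keep selected features, fill missing values with the column mean,
    and min-max scale each feature to the range [0, 1]."""
    columns = {}
    for name in features:
        present = [row[name] for row in rows if row.get(name) is not None]
        fill = mean(present)  # raises if a feature has no values at all
        values = [row[name] if row.get(name) is not None else fill for row in rows]
        low, high = min(values), max(values)
        span = high - low
        columns[name] = [(v - low) / span if span else 0.0 for v in values]
    return [{name: columns[name][i] for name in features} for i in range(len(rows))]


# Hypothetical raw sensor records; operator_id is irrelevant to the objective.
raw = [
    {"temperature": 60.0, "vibration": 2.0, "operator_id": 17},
    {"temperature": None, "vibration": 4.0, "operator_id": 4},
    {"temperature": 80.0, "vibration": 3.0, "operator_id": 9},
]

clean = prepare(raw, ["temperature", "vibration"])
for row in clean:
    print(row)
```

Mean filling and min-max scaling are only one reasonable choice. The right strategy depends on the data and on the model you pick in the next step.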

Step 3. Select a Lightweight and Appropriate Modeling Technique

Choose a modeling method that matches the complexity of your task. The goal is efficiency, not unnecessary complexity. Select an approach that balances performance, interpretability, and resource usage. A micromodel should run smoothly even in limited computing environments.
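To show how lightweight a model can be, here is a sketch of a decision stump: a classifier with a single learned threshold on one feature. It is one possible lightweight technique among many, not a recommendation for every task. The function names and the sample data are hypothetical, and the code assumes at least two distinct feature values.

```python
def fit_stump(values, labels):
    """Find the threshold on one feature that best separates two classes.

    The stump predicts class 1 when the value is above the threshold.
    """
    candidates = sorted(set(values))
    best_threshold, best_correct = None, -1
    # Try the midpoint between each pair of neighboring feature values.
    for low, high in zip(candidates, candidates[1:]):
        threshold = (low + high) / 2
        correct = sum((v > threshold) == bool(y) for v, y in zip(values, labels))
        if correct > best_correct:
            best_threshold, best_correct = threshold, correct
    return best_threshold


def predict_stump(threshold, value):
    return 1 if value > threshold else 0


# Hypothetical vibration readings and fault labels (1 = faulty).
vibration = [1.8, 2.0, 2.1, 2.3, 3.9, 4.2, 4.5, 4.8]
faulty = [0, 0, 0, 0, 1, 1, 1, 1]

threshold = fit_stump(vibration, faulty)
print(predict_stump(threshold, 4.0))  # 1
print(predict_stump(threshold, 2.0))  # 0
```

The trained model is one number, so it is easy to explain to stakeholders and cheap to run. If a stump is too simple for the task, move up in complexity only as far as the results require.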

Step 4. Hire an Experienced AI Development Partner

Building a micromodel is not just about training an algorithm. It requires proper architecture planning, a validation strategy, and smooth system integration.

Hiring an experienced AI development company like ownAI ensures the model aligns with your business goals, fits within your larger system, and remains scalable over time.

Step 5. Develop, Test, and Refine the Model

Now train the model using your prepared dataset.

Validate it using proper evaluation metrics. Check accuracy. Look for signs of overfitting.

Because micromodels use smaller datasets, you can iterate quickly. Adjust parameters. Test again. Refine until performance is stable and reliable within the defined scope.

Do not rush this step. Precision is the strength of micromodels.
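The train, validate, and check-for-overfitting loop can be sketched as follows. This is an illustration under assumptions: the model is a simple threshold placed midway between the two class means, the hold-out split keeps every third sample for validation, the 0.10 gap tolerance is arbitrary, and the samples are hypothetical.

```python
from statistics import mean


def train_threshold(values, labels):
    """Place the decision threshold midway between the two class means."""
    class_0 = [v for v, y in zip(values, labels) if y == 0]
    class_1 = [v for v, y in zip(values, labels) if y == 1]
    return (mean(class_0) + mean(class_1)) / 2


def accuracy(threshold, values, labels):
    hits = sum(int(v > threshold) == y for v, y in zip(values, labels))
    return hits / len(values)


# Hypothetical (vibration, faulty) samples.
samples = [(1.8, 0), (4.2, 1), (2.0, 0), (4.5, 1), (2.1, 0), (3.9, 1),
           (2.3, 0), (4.8, 1), (2.6, 0), (3.4, 1), (1.9, 0), (4.1, 1)]

# Deterministic hold-out split: every third sample is kept for validation.
validation = samples[::3]
training = [s for i, s in enumerate(samples) if i % 3 != 0]

train_x, train_y = zip(*training)
val_x, val_y = zip(*validation)

threshold = train_threshold(train_x, train_y)
train_acc = accuracy(threshold, train_x, train_y)
val_acc = accuracy(threshold, val_x, val_y)

# A large gap between training and validation accuracy suggests overfitting.
MAX_GAP = 0.10  # assumed tolerance, tune for your use case
overfitting_suspected = (train_acc - val_acc) > MAX_GAP

print(train_acc, val_acc, overfitting_suspected)  # 1.0 1.0 False
```

With real data you would usually shuffle before splitting and consider cross-validation, since a single small validation set can give a noisy estimate.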

Step 6. Deploy and Integrate Within the Larger System

Once validated, deploy the model on the target platform. This could be an internal system or an edge device.

Ensure it integrates smoothly with other components if it is part of a larger architecture.

After deployment, continue monitoring performance. Update the model as new data becomes available or business conditions change.

Micromodels are not one-time builds. They require ongoing alignment with your objectives.
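Post-deployment monitoring can start very simply. The sketch below tracks accuracy over the most recent predictions and signals when it drops below a floor. `AccuracyMonitor`, the window size, and the accuracy floor are hypothetical choices for illustration, and the approach assumes the true outcomes eventually become available for comparison.

```python
from collections import deque


class AccuracyMonitor:
    """Tracks accuracy over a sliding window of recent predictions."""

    def __init__(self, window: int = 50, min_accuracy: float = 0.9):
        self.outcomes = deque(maxlen=window)  # oldest results drop off automatically
        self.min_accuracy = min_accuracy

    def record(self, predicted, actual) -> None:
        self.outcomes.append(predicted == actual)

    def needs_retraining(self) -> bool:
        if len(self.outcomes) < self.outcomes.maxlen:
            return False  # not enough evidence yet
        return sum(self.outcomes) / len(self.outcomes) < self.min_accuracy


monitor = AccuracyMonitor(window=5, min_accuracy=0.8)

for _ in range(5):
    monitor.record(predicted=1, actual=1)  # five correct predictions
print(monitor.needs_retraining())  # False

for _ in range(2):
    monitor.record(predicted=1, actual=0)  # two recent mistakes
print(monitor.needs_retraining())  # True
```

A signal like this is a prompt to investigate, for example by checking whether incoming data has shifted, before retraining the model.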

That’s it! You’ve successfully built micromodels for your business.

Why Choose ownAI to Build Micromodels in Machine Learning?

Micromodels work only when they are built with clarity. A poorly scoped or poorly integrated micromodel can create more complexity instead of solving problems.

That is why businesses choose ownAI to build micromodels.

We first define the exact problem that needs to be solved. If a micromodel does not deliver clear and measurable value, we do not recommend building it.

Here is what you can expect when working with ownAI:

Business First Approach

Every micromodel is linked to a measurable outcome. We focus on real impact, not experiments.

Clear Scope and Strong Architecture

Each model is designed to fit smoothly within your larger system. No technical conflicts later.

Efficient and Production Ready

We use the right lightweight methods, prepare clean data properly, and validate thoroughly. Models are built for real-world use.

Scalable and Modular Setup

You can add new micromodels as your needs grow. There is no need to rebuild the entire system.

Ongoing Monitoring and Optimization

We continuously track performance and keep your models aligned with evolving business goals.

Ready to build your micromodels?

Book a free consultation with our AI experts and get a practical plan that truly works for your business.

Conclusion

Micromodels in machine learning show that focused solutions often deliver better business value than large, complex systems. They solve one clear problem, use less data, require fewer resources, and are easier to manage and scale.

When planned and integrated properly, micromodels help businesses achieve precise results without unnecessary complexity. The key is clear objectives, clean data, and strong system design.

We hope this guide helped you understand what micromodels in machine learning are, along with their benefits, limitations, and how to implement them effectively.

If you are ready to build micromodels that deliver real results, hire experienced AI developers and let professionals build your micromodels smoothly.

FAQs

1. What are micromodels in machine learning, and why should businesses care?

Micromodels are small, task-focused AI models built to solve one clearly defined problem inside a larger system. Businesses should care because they reduce cost, speed up deployment, and deliver precise results without the overhead of large, complex AI systems.

2. When are micromodels a smarter choice than large AI models?

Micromodels are a better choice when you need accuracy for a specific task, faster implementation, or lower infrastructure cost. If your goal is to improve one function, not build a massive general system, micromodels are often more practical and efficient.

3. Do micromodels really need less data and computing power?

Yes. Since they focus on a narrow objective, they are trained on smaller and more relevant datasets. They also require less memory and processing power, which makes them suitable for edge devices, IoT systems, and cost-sensitive environments.

4. Can multiple micromodels replace one large model?

In many cases, yes. Several micromodels can work together inside a larger architecture, where each handles a specific task. This modular approach allows businesses to scale gradually instead of depending on one heavy, complex system.

5. What are the biggest limitations of micromodels?

Because they focus on a narrow scope, they may miss broader patterns or system-wide relationships. They also require proper coordination when multiple models are used together. Without strong planning, management complexity can increase.

6. Are micromodels risky due to overfitting?

They can be, especially when trained on small datasets. That is why validation, testing on separate data, and iterative refinement are essential. Proper evaluation reduces the risk of the model learning noise instead of meaningful patterns.

7. How scalable are micromodels for long-term business growth?

Micromodels are highly scalable when built with the right architecture. New models can be added as business needs evolve, without rebuilding the entire system. This makes them suitable for phased AI adoption strategies.

8. Why is expert guidance important when building micromodels?

Micromodels may be small, but building them correctly requires clear scoping, high-quality data preparation, proper validation, and smooth integration. Expert AI teams ensure the model delivers measurable value and works reliably inside real operational systems.